Artificial Intelligence for Security Operations: From Copilots to Agents

AI for security operations uses large language models and autonomous agents to automate threat detection, alert triage, and incident response across Security Operations Centres (SOCs). Security copilots such as Microsoft Security Copilot assist analysts in real time, while agentic platforms including n8n and CrewAI enable fully automated security workflows.

The shift from rule-based automation to large language model-driven agents represents the most significant change in how Security Operations Centres handle detection and response since the introduction of Security Information and Event Management (SIEM).

How Has AI Changed Security Operations So Far?

Artificial Intelligence (AI) is changing how Security Operations Centres (SOCs) function - reducing the time analysts spend on manual alert triage and enabling faster, more consistent responses to threats at scale. As organisations and industries continue to grow, the demand for speed and scale applies to every function, including cyber security.

Interest in AI has grown dramatically. What sparked the initial interest was the arrival of ChatGPT, which brought Large Language Models (LLMs) into the mainstream. Whilst those early models are a long way from the capability we often see today, they took the world by storm, writing code, performing in-depth analysis and sometimes reasoning through complex tasks.

Due to the sensitive nature of cyber security, adoption of ChatGPT and similar offerings within security teams was slower than anticipated. The need for confidential 'chats' limited the ways in which teams could adopt such technology. The operational cost of this gap was significant: according to the IBM Cost of a Data Breach Report 2024, organisations using AI and automation in security operations identified and contained breaches an average of 98 days faster than those without.

As more models became available and software made it possible to "self-host" LLMs in a self-managed environment, organisations could use LLMs in a more controlled fashion. During this period, Microsoft announced Security Copilot, one of the first chat-based, security-focused solutions to hit the mainstream market.

Microsoft Security Copilot's distinguishing promise was that Microsoft had trained this version of Copilot on security-specific data, drawing on more than 100 trillion daily signals processed across Microsoft's global threat intelligence infrastructure. This meant that organisations could now submit security events and alerts and then have conversations about further investigation guidance, response steps and strategic risk mitigation advice.

CrowdStrike also launched Charlotte AI, offering very similar capabilities. Charlotte AI uses CrowdStrike's Falcon platform telemetry to provide natural-language alert summaries and suggested containment actions directly within the analyst console, enabling teams to query detections in plain English and receive recommended next steps without leaving the platform.

These early copilots highlighted several critical requirements for the industry:

- Training data

- Better models

- A more automated mechanism versus chatting

- Better prompting techniques

- Ability to be autonomous

What Are AI Agents in Cybersecurity?

The hype around "agentic AI" then became mainstream. In the security industry, this meant another iteration of Security Orchestration, Automation and Response (SOAR), as defined in Gartner's SOAR market category, with the promised ability to build workflows of LLMs and give them instructions, programmatic input, and connections to tools via APIs and file systems.

A growing number of platforms make it straightforward for organisations to get started with AI agents. n8n and CrewAI, for example, offer low-code workflow builders with the following capabilities:

- Bring your own model

- Self-host

- Test with version control

- Connect tools via APIs

- Connect knowledge to prime the LLM

- Perform Retrieval-Augmented Generation (RAG) operations during the workflow runtime. In a SOC context, RAG allows the agent to query a vector-embedded knowledge base of internal runbooks and threat intelligence feeds at runtime, grounding its responses in organisation-specific data
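
As a rough illustration of the RAG step described in the last item, the sketch below retrieves the most relevant runbook for an alert and places it in the prompt. It is a minimal sketch under stated assumptions: the runbook text is hypothetical, and the word-count `embed` function is a toy stand-in for a real embedding model and vector store, which the workflow platform would normally provide.

```python
import math
import re
from collections import Counter

# Hypothetical runbook snippets; a real deployment would index internal documents.
RUNBOOKS = {
    "phishing": "Phishing email reported: quarantine the message, reset user credentials, check mailbox rules.",
    "ransomware": "Ransomware detected: isolate the host from the network, preserve memory, notify incident lead.",
    "brute_force": "Repeated failed logins: check source IP reputation, lock the account, review MFA status.",
}


def embed(text: str) -> Counter:
    """Toy embedding (assumption): lower-cased word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 1) -> list[str]:
    """Return the names of the k runbooks most similar to the query."""
    q = embed(query)
    ranked = sorted(RUNBOOKS, key=lambda name: cosine(q, embed(RUNBOOKS[name])), reverse=True)
    return ranked[:k]


def build_prompt(alert: str) -> str:
    """Ground the model's instructions in the retrieved runbook text."""
    context = "\n".join(RUNBOOKS[name] for name in retrieve(alert))
    return f"Context from internal runbooks:\n{context}\n\nAlert:\n{alert}\n\nRecommend next steps."


if __name__ == "__main__":
    print(build_prompt("Multiple failed logins for account admin from a single IP"))
```

The design point is that retrieval happens at runtime, so updating a runbook changes the agent's guidance without retraining or re-prompting the model.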

In our experience implementing SOAR for clients, the recurring barrier was the time investment required to build and maintain playbooks, rather than the technology itself. Gartner has noted that SOAR deployments frequently fail to achieve projected automation rates, with integration complexity and ongoing playbook maintenance cited as primary barriers. AI agents represent the next evolution, offering a simpler design and implementation process by removing the dependency on rigid, hand-authored rules.

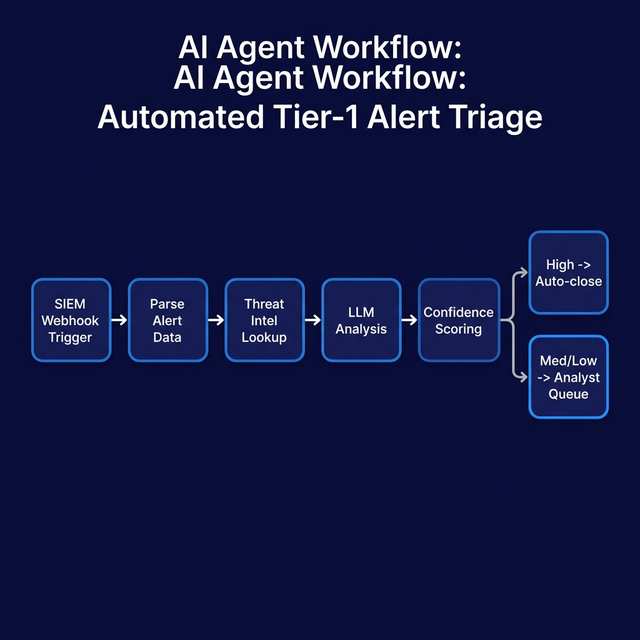

In our deployments at Precursor Security, we have used n8n with a locally hosted language model to automate Tier-1 alert triage, ingesting detections from the SIEM via webhook, enriching each alert with threat intelligence lookups, and returning a confidence-scored recommendation to the analyst queue. In one deployment, analysts reported spending significantly less time on repetitive triage tasks within the first four weeks of the workflow going live, with a material reduction in alerts requiring human escalation.
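
The shape of such a triage workflow (ingest, enrich, recommend) can be sketched in a few lines. This is not the deployed workflow: the alert field names (`id`, `source_ip`, `severity`), the `THREAT_INTEL` table, and the point-based scoring are all assumptions for illustration, and the simple heuristic in `score` stands in for the language model step.

```python
import json
from dataclasses import dataclass

# Hypothetical threat intelligence data; a real workflow would query a TI service.
THREAT_INTEL = {"203.0.113.7": {"malicious": True, "tags": ["botnet"]}}


@dataclass
class Recommendation:
    alert_id: str
    action: str
    confidence: float
    rationale: str


def enrich(alert: dict) -> dict:
    """Attach threat intelligence for the alert's source IP (empty result if unknown)."""
    intel = THREAT_INTEL.get(alert.get("source_ip", ""), {"malicious": False, "tags": []})
    return {**alert, "intel": intel}


def score(alert: dict) -> Recommendation:
    """Heuristic stand-in for the model: accumulate points, then map them to an action."""
    points = 20
    reasons = []
    if alert["intel"]["malicious"]:
        points += 50
        reasons.append("source IP flagged by threat intelligence")
    if alert.get("severity") == "high":
        points += 20
        reasons.append("high severity detection")
    action = "escalate" if points >= 70 else "review"
    return Recommendation(
        alert_id=str(alert.get("id", "unknown")),
        action=action,
        confidence=points / 100,
        rationale="; ".join(reasons) or "no risk indicators found",
    )


def handle_webhook(body: str) -> Recommendation:
    """Entry point: parse the SIEM's JSON payload, enrich it, and score it."""
    return score(enrich(json.loads(body)))


if __name__ == "__main__":
    payload = json.dumps({"id": "A-1", "source_ip": "203.0.113.7", "severity": "high"})
    print(handle_webhook(payload))
```

Returning a structured recommendation with a rationale, rather than free text, makes it easier for the analyst queue to sort, filter and audit what the agent proposed.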

SOAR vs. Security Copilots vs. AI Agents: A Comparison

| Dimension | SOAR | Security Copilot | AI Agents |
|---|---|---|---|
| Implementation complexity | High - requires lengthy rule-building and API integration | Medium - SaaS onboarding, prompt-driven | Low-to-medium - workflow builders (n8n, CrewAI) reduce code overhead |
| Typical cost | High licence + professional services | Included in Microsoft 365 E5 (400 SCUs/month per 1,000 seats); additional capacity at $4/SCU/hour | Low, especially with self-hosted open models (e.g. DeepSeek's reasoning model) |
| Autonomy level | Rule-based automation only | Analyst-directed, no autonomous action | Fully autonomous with human-in-the-loop option |
| Human involvement | High - rules must be maintained | High - analyst drives every query | Variable - can operate without human input |
| Example platforms | Splunk SOAR, Palo Alto XSOAR | Microsoft Security Copilot, Charlotte AI | n8n, CrewAI, LangChain |
| Primary use case | Playbook execution, alert routing | Analyst augmentation, natural-language investigation | Autonomous triage, enrichment, response |

What Is Next for AI in Security Operations?

A significant topic across the industry is the emergence of DeepSeek's reasoning model, whose API costs are approximately 89-96% lower than GPT-4o per million tokens - materially lowering the barrier to deploying autonomous security agents at scale. For security teams specifically, self-hosted deployments of open-weight models reduce costs further still, and sidestep the data sovereignty concerns that make sending sensitive SOC telemetry to a third-party API endpoint a difficult sell.

The broader AI for security operations market reflects that momentum. MarketsandMarkets projects the global AI in cybersecurity market to reach $60.6 billion by 2028, growing at a CAGR of 21.9%. That growth is being driven not by experimental pilots but by security teams demonstrating measurable triage efficiency gains, and by the compounding cost savings of replacing rules-based SOAR infrastructure with lighter, model-driven workflows.

Agentic AI will continue to advance, lowering the barrier to adoption through workflow-building platforms like n8n. AI agents will enable security teams to perform basic tasks such as:

- Alert quality review

- Analysis of events for recommendations

- Creation of basic SIEM queries

- Trending of alerts

For security teams evaluating where to start, the most practical entry point is a human-in-the-loop agent that handles Tier-1 alert enrichment - ingesting events, querying threat intelligence, and surfacing a confidence-scored recommendation for analyst review. This limits risk, builds institutional familiarity with agentic workflows, and creates a measurable baseline for expanding automation over time.
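
One way to picture the human-in-the-loop boundary is as an approval gate between what the agent proposes and what actually runs. The sketch below is a minimal illustration under assumed names: the action names and the `AUTO_APPROVED` policy are hypothetical, and a real deployment would persist the queue and record who approved each action.

```python
from dataclasses import dataclass, field

# Assumed policy: only read-only enrichment steps run without analyst approval.
AUTO_APPROVED = {"lookup_ip_reputation", "fetch_related_alerts"}


@dataclass
class ReviewQueue:
    pending: list = field(default_factory=list)
    executed: list = field(default_factory=list)

    def submit(self, action: str, target: str) -> str:
        """Run low-risk actions immediately; hold everything else for an analyst."""
        if action in AUTO_APPROVED:
            self.executed.append((action, target))
            return "executed"
        self.pending.append((action, target))
        return "awaiting_analyst"

    def approve(self, index: int = 0) -> tuple:
        """Analyst approval moves a pending action into the executed list."""
        item = self.pending.pop(index)
        self.executed.append(item)
        return item


if __name__ == "__main__":
    queue = ReviewQueue()
    print(queue.submit("lookup_ip_reputation", "203.0.113.7"))  # enrichment runs directly
    print(queue.submit("isolate_host", "workstation-42"))       # containment waits for review
    print(queue.approve())
```

Starting with a small auto-approved set and widening it as confidence grows gives the measurable baseline for expanding automation over time.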

To see more about what Precursor Security are doing with Artificial Intelligence, Large Language Models and Agentic AI, sign up to our upcoming webinar here: https://marketing.precursorsecurity.com/webinar-ai-in-a-soc/